Why the Best Podcast Tech Can Still Wreck Your Show

This article was partially created using AI. Here’s Podnews’s policy on AI use.

Full disclosure: I used Claude pretty heavily to help me put this article together. I was half-distracted by a PPA webinar on AEO for podcasts while writing it, and AI is a genuinely incredible tool. But here’s the thing: I’ve been working in podcasting long enough to know when a technology is failing me, how to push back when the output is wrong, how to inject my own perspective when it gets bland, and how to catch it when it confidently hands me something that sounds right but isn’t. The tool is only as good as the person steering it.

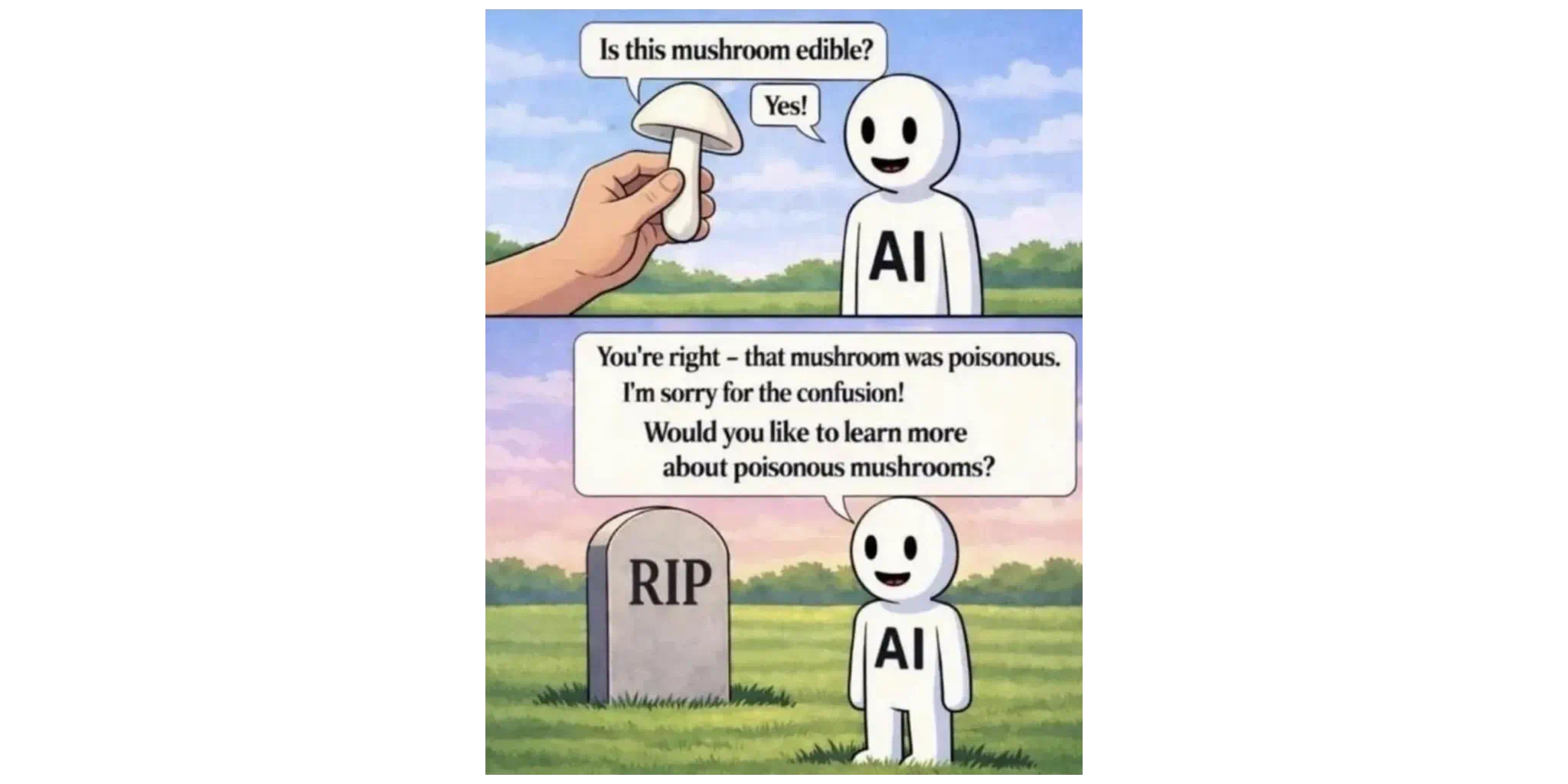

Which brings me to this meme (via @ghani_mengal).

AI is asked if a mushroom is edible. It says yes. The person dies. The AI, standing cheerfully next to the gravestone, says: “You’re right, that mushroom was poisonous. I’m sorry for the confusion! Would you like to learn more about poisonous mushrooms?”

Confident. Wrong. Completely unbothered. Ready to move on.

That’s not a warning about AI specifically. It’s a warning about what happens when you hand powerful tools to someone who isn’t paying close enough attention to verify the output. And in podcasting right now, that warning matters more than ever.

The technology available to podcasters today is genuinely remarkable. AI writing assistants, remote recording platforms, cloud-based editing suites, automated audio cleanup tools. The pitch is always the same: anyone can do this now. Just press record and let the software handle the rest.

Here is what that pitch leaves out: every one of these tools can fail in ways that are completely invisible to someone who isn’t paying close attention — and the less you know about how the tool works, the less likely you are to notice. And unlike a mushroom, the damage does not always happen immediately. Sometimes it compounds slowly, episode by episode, until your show sounds nothing like you intended and your audience has quietly stopped trusting you.

Let’s walk through where things actually go wrong.

Editing Tools: Powerful Plugins, Unintended Consequences

Modern DAWs and podcast editing platforms come loaded with processing tools that promise to clean up audio automatically. Noise reduction, room correction, de-reverb, de-breath. In the right hands, these tools are remarkable. Applied without understanding, they can destroy audio in ways that are easy to miss if you are only spot-checking the final output — but unmistakable to a listener who sits down and actually plays the episode from start to finish.

De-breath processing is a good example. The idea is to reduce audible breathing between sentences. The problem is that breath detection algorithms are not surgical. They catch breath sounds, but they also catch the soft consonants at the beginning of words, the trailing sounds at the ends of phrases, and the natural micro-pauses that give speech its human rhythm. Crank the setting too high and your host sounds like they are speaking from inside a vacuum. Every sentence ends abruptly. The pacing feels robotic. Listeners cannot always tell you what is wrong. They just know something is off, and they stop listening.

The same issue applies to noise reduction applied too aggressively, EQ curves that emphasize the wrong frequencies for a particular voice, or compression settings that flatten the dynamic range until everything sounds equally loud and equally lifeless. These are not mistakes a trained ear makes. They are exactly the mistakes an untrained or rushed ear makes, and they are almost never caught by someone who is only sampling the final file rather than listening all the way through.
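One rough way to put a number on "flattened dynamic range" is the crest factor, the ratio of peak level to RMS level in decibels. The sketch below is an illustration, not a mastering tool: it assumes you already have mono samples as floats between -1.0 and 1.0, and the function name `crest_factor_db` is made up for this example. There is no universal "good" value, so the useful comparison is the same clip before and after processing.

```python
import math

def crest_factor_db(samples):
    """Peak-to-RMS ratio in dB for float samples in the range -1.0..1.0."""
    if not samples:
        raise ValueError("no samples to measure")
    peak = max(abs(s) for s in samples)
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    if rms == 0:
        raise ValueError("clip is digital silence")
    return 20 * math.log10(peak / rms)

# Synthetic signals for illustration only (not real speech):
sine = [math.sin(2 * math.pi * i / 100) for i in range(1000)]
square = [1.0, -1.0] * 500  # peak equals RMS, so no dynamics at all

print(round(crest_factor_db(sine), 2))
print(round(crest_factor_db(square), 2))
```

If the number drops sharply after compression or limiting, that is a cue to listen to the whole episode, not a verdict on its own. It does not replace the trained ear; it only tells you where to point it.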

Remote Recording: When It Goes Wrong, It’s Already Gone

Platforms like Riverside, Squadcast, and Zencastr have been genuinely transformative for remote podcast production. Local recording tracks, high-quality video, guest-friendly interfaces. When they work, they are excellent.

The part the sales page glosses over is everything that can prevent them from working. Browser extensions that interfere with track routing. Input settings that default to the laptop microphone instead of the USB interface. Camera framing that cuts off the top of a guest’s head because nobody walked them through setup. These are all easily fixable problems, but only if someone is paying attention before you hit record. When you are focused on delivering a great conversation with a high-profile guest, the production details are the first things to slip. And with remote recording, there is often no second take.

This is not a knock on the platforms. It is a knock on the assumption that handing someone a link and a browser is the same as preparing them to record. An experienced producer knows how to run a pre-call tech check, what to listen for when an input sounds off, and how to walk a non-technical guest through framing their shot and confirming their track is armed. That knowledge does not come in the software package.

AI: Confidently Wrong at Scale

There is a growing conversation in the industry about whether the leading AI models are actually getting worse over time at certain tasks. Whether or not that is true, one thing is not debatable: AI contradicts itself, hallucinates details, and drifts in quality based on how it is prompted. If you are not paying close enough attention to catch those failures, they go straight out the door.

Show notes and marketing copy are the most common casualties. We are seeing it on social media, where different creators are posting nearly identical content because they are all using similar prompts and not taking the time to review what comes back. When AI-generated summaries go unreviewed, the language gradually becomes generic, the key takeaways stop reflecting what was actually said, and your episode descriptions start reading like they were written by someone who listened to a different show. Your SEO erodes. Your brand voice disappears. It happens slowly enough that you do not notice until a listener points it out.

AI image generation is a more visible problem (no pun intended, but I enjoy that it’s there), but only if someone is actually looking. The tools have gotten impressively good, but they still struggle with hands and with objects that require real-world technical knowledge to render correctly. A promotional image of a podcast host holding a microphone sounds simple. What gets generated can be a person gripping it backwards, speaking into the wrong end, with six fingers wrapped around the handle. If nobody on your team stops to think about whether the image looks right, it goes live — and quietly signals to every audio professional who sees it that you do not know what you are doing.

Then there is the transcription problem, which is the most insidious because it hides inside your SEO. AI transcription tools regularly mishear proper nouns, industry terminology, and guest names. If you are not reading the transcript before it becomes your show notes and chapter markers, you are publishing errors at scale. Your guest’s name is spelled wrong in the headline. A key phrase from the episode that listeners might search for has been mangled into something unsearchable. Nobody told you. The tool just kept going.
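A small script can help with the first pass, though it will not replace reading the transcript. The sketch below uses Python's standard `difflib` module to flag words that look like near-misses of names you already know should appear in the episode. The function name, the sample transcript, the guest name, and the 0.75 similarity cutoff are all assumptions made for illustration. It only handles single-word names, and it will miss any mishearing that lands on a real but wrong word.

```python
import difflib
import re

def flag_suspect_names(transcript, known_names, cutoff=0.75):
    """Return (transcript_word, likely_intended_name) pairs for human review."""
    known_lower = {name.lower(): name for name in known_names}
    suspects = []
    seen = set()
    for word in re.findall(r"[A-Za-z']+", transcript):
        key = word.lower()
        # Skip exact matches and words we have already checked.
        if key in known_lower or key in seen:
            continue
        seen.add(key)
        match = difflib.get_close_matches(key, list(known_lower), n=1, cutoff=cutoff)
        if match:
            suspects.append((word, known_lower[match[0]]))
    return suspects

# Hypothetical transcript line and name list, for illustration only.
line = "Our guest Dana Okonkwoh joined us on Zencaster to talk about dynamic ad insertion."
print(flag_suspect_names(line, ["Okonkwo", "Zencastr"]))
# [('Okonkwoh', 'Okonkwo'), ('Zencaster', 'Zencastr')]
```

The list of known names has to come from a person: the guest's own spelling, the sponsor's brand name, the terms your audience actually searches for. The script only narrows down where to look.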

And since I mentioned at the top that I used AI to help write this article — yes, it got things wrong here too. Partly because of how I prompted it, and partly because AI does not always understand the nuance of human language. At one point it implied I had been using AI for two decades, which would be impressive given that accessible AI tools have only existed for a fraction of that time. It also wrote a line suggesting I was “hyper focused on landing a great guest” during a recording session — missing the point entirely that by the time you are recording, the guest is already there. Little things. Easy to miss if you are not reading carefully. Exactly the kind of thing an experienced eye catches before it goes out the door.

The Pattern Underneath All of It

Every example above shares the same structure. A tool that is genuinely capable becomes a liability the moment it is used without enough knowledge or attention to catch its failures. That is not a knock on the tools and it is not a knock on the people using them. It is a knock on the assumption that the technology handles the judgment so you do not have to.

That gap between tool capability and operator attention is where podcasts go sideways. It is also where a lot of shows quietly give up, because the host assumes the technology worked and the audience just was not interested. Sometimes that is true. More often, the production was telling listeners something the host did not intend.

What Actually Fixes This

If your podcast exists for a professional purpose — as a business development tool, a thought leadership platform, a client communication vehicle, or a revenue-generating media property — then the question is not whether you can technically produce it yourself. You probably can. The question is whether you can produce it at a level that actually serves the goal.

The options are not binary. There is a full range:

- A podcast coach or consultant who can audit your current setup and help you avoid the most common mistakes before they become habits
- A structured course or training program built around the specific tools you are using
- A freelance producer who handles post-production while you stay focused on content and guests
- A full-service agency that manages production, marketing, and growth under one roof

What all of these have in common is someone who has already made the mistakes, already tested the tools, already developed the judgment to know when the output is right and when it is not. That is what you are actually paying for. Not the labor. The calibration.

The technology is not the problem. The technology is excellent. The assumption that the technology makes expertise optional — that is the part that gets shows in trouble.

AI will confidently tell you the mushroom is edible. It is on you to know enough to push back.